One Year After the First AI-Powered University: Everyone Uses AI, Now What?

On April 1, 2026, California State University released the report Ahead of the Curve: What the Nation’s Largest Public University System is Learning About AI. The report presents to the public the results of a comprehensive survey that a team from CSU San Diego conducted in the fall of 2025. With 94,060 respondents spanning students, staff, and faculty, the survey is impressive in scale.

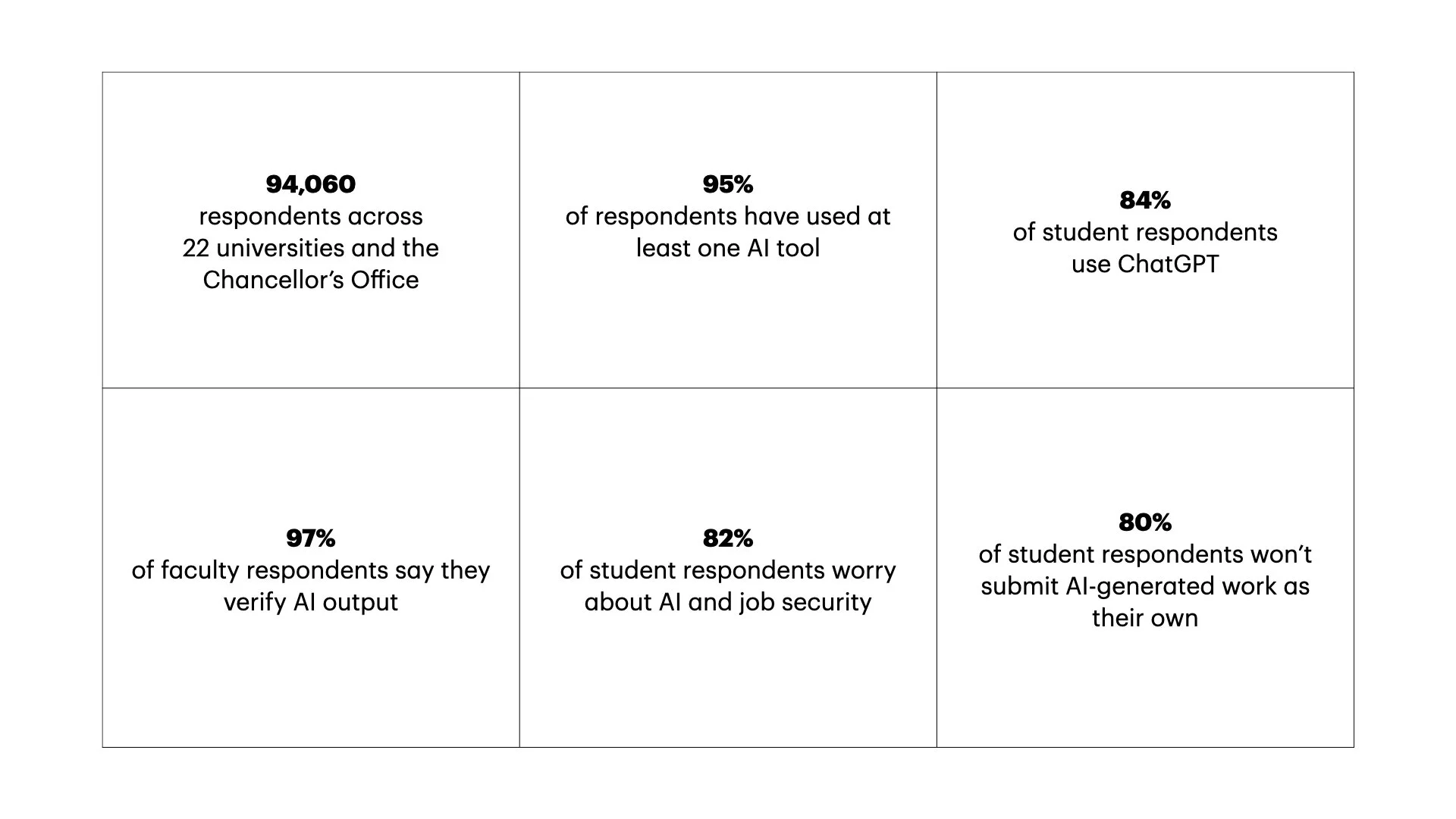

Here is the quick fact sheet the report provides:

The report sparked a wave of media coverage because it was a good story. We’re all wondering: “What are students doing with this technology?” “Did the CSU actually mess up when they signed this deal?” “Has ChatGPT ushered in a golden age of productivity?” “Are we all going to be out of a job soon?” You know, those kinds of questions.

The Los Angeles Times ran a good story that not only details the results but also surveys the opinions of students, staff (turns out they really like ChatGPT), and faculty, who not surprisingly are the most divided population on whether the deal with OpenAI is good or bad (wink, can it be both?).

Zach was interviewed for this story and he sums up the responses nicely:

“We still have people that want to pretend this doesn’t exist. We still have people that are adapting and doing amazing work in real time. And we have people that would prefer to keep it out of their classrooms,” Justus said. “What I always tell faculty is, ‘Don’t outsource the thing that you love.’ If you love reading and then creating visuals for a complex article, great, keep doing that. But if that was the thing that you hated doing and weren’t good at, then you can get some help with that.”

A year ago, when the CSU cut the deal to put ChatGPT in everyone’s pocket, I argued in Thoughts on the First AI-Powered University that the story wasn’t AI, since people would use it anyway; it was access leading to normalization. Flip the switch systemwide and we move from chaotic, uneven, and gradual adoption to a phase change. That’s more or less exactly what happened. The CSU survey shows an astonishing 95% usage. The experiment is over. AI is not coming to higher ed; it’s already ambient, much like electricity, the analogy I’ve used before.

I also argued that once AI is everywhere, the cheating discourse collapses. That’s only half right. In practice, everyone is using it. In rhetoric, almost no one is willing to say they are crossing the line: 80% of students say they wouldn’t submit AI work as their own. Whether you read that as integrity or performance, the important point is this: the norms didn’t crystallize; they got weird.

What I got right is that AI would expose the fiction of scarcity that higher ed runs on. Students are very explicit about what they’re doing: using AI as a tutor, a feedback engine, a way to get unstuck at 3AM. In other words, they are building the support structures the institution doesn’t have the capacity to provide.

What I underestimated is how quickly this would become normal, even if it is still partly underground. Just… part of life. People use AI the way they use Google Docs or email.

I ended the piece with some speculation, writing:

Does this vision position CSU as frontier leader or hasten its irrelevance in the long run as AI takes over more and more core functions of the university? The CSU is making a bet. We won’t know for some time how it pays off. In the meantime, it is safe to say that generative AI will officially be in the mix of everything we all do at the university.

While the survey supports my assertion that generative AI is becoming an ambient technology, it doesn’t yet answer the first question about the implications of this phase shift. For that, we need more time.

And this is where the system breaks into a slow, visible incoherence. Faculty policies are all over the place: encourage, forbid, ignore, require. Students adapt anyway. Staff move the fastest of all. As with all technology, it is users, not the institution, who ultimately decide what AI is for. This will be alarming to some and liberating for others.

So the question I ended with last year still stands, but it is even more potent now: if AI can do more and more of our work, what exactly is the work we think is still ours?